It is not difficult to find ridiculously precise recommendations for the serving temperatures of different types of wine. I don’t worry too much: white wine from the fridge is a little cool but warms up quickly enough, and the same goes for red wine from the cellar (but red wine at room temperature is better if you put it in the fridge for an hour). But I was wondering the other day how long it would take me to cool a bottle of white wine for guests, so I asked Google and found this article, which says:

Fridge

In the fridge, it took 2.5 hours for red wine to reach its ideal temperature of 55° and 3 hours for white wine to reach its ideal temperature of 45°.

Freezer

In the freezer, it took 40 minutes for red wine to reach its ideal temperature and 1 hour for white wine to reach its ideal temperature.

Which was a bit irritating because it didn’t give the room temperature, fridge temperature, or freezer temperature. And that big difference in temperature for an extra half hour in the fridge seemed fishy. More on that later.

For Science!

Not trusting this article, and not finding solace in the millions of “real-world” experiments about Newton’s Law of Cooling you can find in course websites (is the data really real?), I decided to conduct an experiment of my own. So I bought one of these.

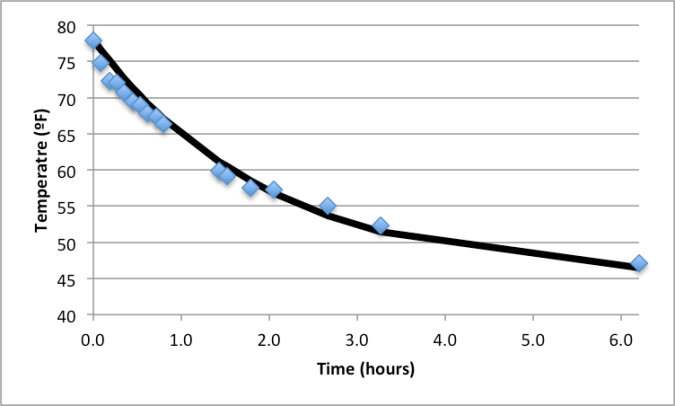

I took a bottle of white wine at 79ºF (which doesn’t bother me for everyday drinking wines because of these experiments) and put it in the fridge at 45ºF (yes, I know, have to do something about that). I measured the temperature at intervals over the next 6 hours. (Not regular intervals, because I have work to do.) The blue dots are the data points and the black line is the graph of the solution to Newton’s Law of Cooling, which says that the difference between the bottle temperature and the fridge temperature decreases by the same factor every hour. I used a factor of 0.6 (that is, the difference at the end of the hour is 0.6 times what it was at the beginning), which seems to fit the data pretty well. No, I did not do a logarithmic regression, I just fiddled with the parameters in a graphing utility (dammit, Jim, I’m a mathematician, not a scientist!). (Or is that joke better the other way around?)
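For anyone who wants to play with the numbers, here is a minimal Python sketch of the model described above. The function name `bottle_temp` and its parameter names are made up for illustration; the defaults are the 79ºF bottle, the 45ºF fridge, and the eyeballed factor of 0.6 from the text, not a fitted value.

```python
def bottle_temp(hours, start=79.0, ambient=45.0, factor=0.6):
    """Bottle temperature (in ºF) after `hours` in surroundings at `ambient`.

    Newton's Law of Cooling in the form used in the post: the gap between
    bottle and surroundings is multiplied by `factor` every hour.
    """
    return ambient + (start - ambient) * factor ** hours


# Hourly predictions over the six hours of the experiment.
for h in range(7):
    print(f"{h} h: {bottle_temp(h):.1f} ºF")
```

With these inputs the model puts the bottle at about 65ºF after one hour and about 52ºF after three, closing in on the fridge temperature from above without ever quite reaching it.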

Then I tried to make sense of the article. Let’s say the fridge temperature was 35ºF. That extra half hour for white wine over red wine halved the difference between bottle and fridge from 20 to 10. So, in an hour, the difference shrinks by a factor of 0.25 (two halvings). This doesn’t agree with my 0.6, and it also doesn’t make sense, because it would suggest that the white wine was at a temperature of 675ºF when it was put in the fridge (a difference of 10 after three hours means a starting difference of 10/0.25³ = 640). And I couldn’t fix this by making different reasonable assumptions. After fooling around a bit, I found that all the numbers fit with my factor of 0.6 if you assume that the room temperature was 75ºF, the fridge temperature was 37ºF, the freezer temperature was 0ºF, and the 2.5 is a typo for 1.5.
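The arithmetic in this paragraph can be checked with a short Python sketch. The helper `hours_to_reach` is a hypothetical name, and the room, fridge, and freezer temperatures are the guesses from the text, not figures given by the article.

```python
import math


def hours_to_reach(target, start, ambient, factor=0.6):
    """Hours until a bottle starting at `start` reaches `target` (all ºF),
    if the gap to `ambient` is multiplied by `factor` every hour."""
    return math.log((target - ambient) / (start - ambient)) / math.log(factor)


# The article read literally: fridge at 35ºF and a factor of 0.25 per hour.
# White wine is 10 degrees above the fridge after 3 hours, so it started at:
implied_start = 35 + (45 - 35) / 0.25 ** 3
print(f"implied starting temperature: {implied_start:.0f} ºF")

# The guessed setup: room 75ºF, fridge 37ºF, freezer 0ºF, factor 0.6.
room, fridge, freezer = 75.0, 37.0, 0.0
for place, ambient in (("fridge", fridge), ("freezer", freezer)):
    for wine, target in (("red", 55.0), ("white", 45.0)):
        t = hours_to_reach(target, room, ambient)
        print(f"{wine} wine in the {place}: {t:.2f} hours")
```

Under those guesses the fridge times come out close to 1.5 and 3 hours, and the freezer times close to 40 minutes and 1 hour, which is what makes the typo theory plausible.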

So, a good rule of thumb is that the temperature difference halves every hour, plus a bit. This should also work for when you want a wine to warm up a little.
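To put a number on "plus a bit", and to try the rule in the warming direction, here is a small Python sketch. It assumes the factor of 0.6 from the experiment carries over to warming, which the post suggests but does not test; the 37ºF and 75ºF figures are the guessed fridge and room temperatures from earlier.

```python
import math

factor = 0.6  # hourly factor from the experiment (eyeballed, not fitted)

# Time for the temperature difference to halve: solve factor ** t == 0.5.
halving_time = math.log(0.5) / math.log(factor)
print(f"difference halves every {halving_time:.2f} hours")

# Warming: a bottle at 37ºF left out in a 75ºF room for one hour.
start, room = 37.0, 75.0
after_one_hour = room + (start - room) * factor
print(f"after one hour on the counter: {after_one_hour:.1f} ºF")
```

So with a factor of 0.6 the halving time is roughly an hour and twenty minutes, and a fridge-cold bottle would be in the low 50s after an hour at room temperature.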

What about the heat conductance of the bottle? I.e., did you check that the wine was at the same temperature as the bottle?

No, I guess I should do that. I have a Sensorist bottle probe I could use.

I just went out and played with the infrared thermometer on the bottle that has the probe in it. The readings are the same to within measurement error of the infrared thermometer. Consecutive readings from it can vary by a few tenths of a degree.

That’s at steady state, of course. When things are heating up (e.g. because the cooling unit has shut down) I’ve noticed the temperature in the bottle lags the ambient temperature by a degree or so.
